Scope+

Issue: XXIII.4, July - August 2016, Page: 16

Authors:

Yu-Hsuan Huang, Tzu-Chieh Yu, Pei-Hsuan Tsai, Yu-Xiang Wang, Wan-ling Yang, Hao-Yu Chang, Yu-Kai Chiu, Tsai Yu-Ju, Ming Ouhyoung

Describe what you made. Scope+ is a pioneering prototype of an augmented reality (AR) microscope for surgical training and education.

Briefly describe the process of how this was made. First, we had to build hardware similar to a surgical microscope that was suitable for AR applications. We modified the Cartesian motor system from an open source 3D printer (SciBot, a variant of the Prusa i3 with an acrylic frame) and replaced the extruder with a specially designed microscope module composed of two industrial cameras, an adjustable convergence structure, and a circular light. Second, because surgeons usually use a foot pedal to adjust the parameters and the field of view during surgery, we used the circuit from a flight joystick and translated its signals into G-code to control these functions. The system generates the corresponding interactions and virtual objects according to the image-processing results from the real-time video taken by the microscope module.
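The article does not say how the joystick signals were mapped to G-code, so the following is only a minimal sketch of one possible mapping. It assumes the joystick axes have already been read and normalized to the range -1 to 1, and that the motor controller runs RepRap-style firmware that accepts `G91` (relative positioning) and `G1` (linear move with feed rate `F`). The function name `axes_to_gcode`, the dead zone, the step size, and the feed rate are all hypothetical.

```python
def axes_to_gcode(x, y, z=0.0, deadzone=0.1, max_step_mm=1.0, feed_mm_min=600):
    """Map joystick axes in [-1, 1] to one relative G1 move.

    Returns None when every axis is inside the dead zone, so an idle
    pedal sends nothing. All parameter values are illustrative
    assumptions, not figures from the article.
    """
    def scale(value):
        value = max(-1.0, min(1.0, value))   # clamp noisy readings
        if abs(value) < deadzone:            # ignore small drift around center
            return 0.0
        return round(value * max_step_mm, 3)

    steps = (("X", scale(x)), ("Y", scale(y)), ("Z", scale(z)))
    words = [f"{axis}{step:.3f}" for axis, step in steps if step != 0.0]
    if not words:
        return None
    return " ".join(["G1", *words, f"F{feed_mm_min}"])


if __name__ == "__main__":
    print("G91")                      # switch to relative positioning once
    print(axes_to_gcode(0.5, 0.0))    # half deflection on X only
    print(axes_to_gcode(0.05, -0.02)) # inside the dead zone
```

Sending small relative moves per reading keeps the pedal logic stateless; a real controller would also need to pace the commands so the firmware's buffer does not fill up.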

What for you is the most important/interesting thing about what you made? The most interesting challenges we faced were how to get the proper stereoscopic video in a microscopic world and how to match the stereo virtual objects onto the real-world videos with acceptable latency and frame rate.

|

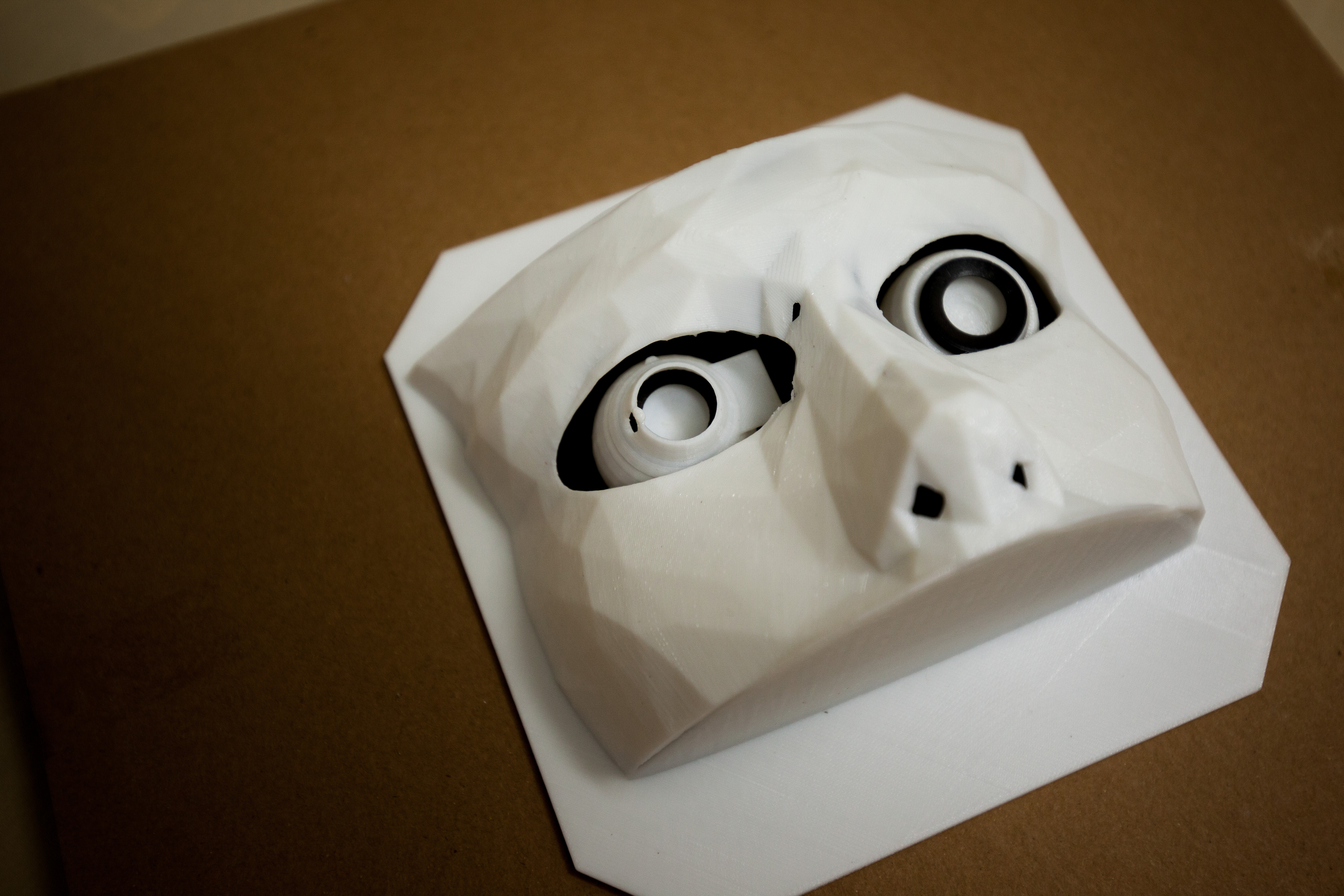

The first prototype of microscope module, which had no convergence structure inside. This one failed after a few minutes' testing. |

Was this a collaborative process, and if so, who was involved? This was a collaborative process involving eight computer science students. Two of them are undergraduates and five are master's students. The last one is our project leader, who is a Ph.D. candidate in computer science and also an ophthalmologist in Taiwan.

The fourth prototype of the microscope module with a stronger acrylic case and two adjustable mirrors in the middle of the cameras.

What expertise (skills and competences) did it require? For the software development, we needed knowledge of image processing, computer graphics, rendering, and GPU computing. For the hardware design and manufacturing, we had to not only study optics and the physiology of the eye, but also know how to draw three-view diagrams for the laser cutter, how to create models for 3D printing, and how to solder for circuit board assembly.

The first prototype of Scope+, which could provide correct microscopic stereo AR vision and simple interactions, was completed in six long days.

What materials and tools did you use? The main structure is composed of acrylic pieces made by the laser cutter. The motor system has four stepper motors controlled by an Arduino. The microscope module has two industrial cameras, one adjustable convergence structure, and one circular light inside. Some of the small components in the microscope module are 3D printed with polylactide (PLA) filament. The circuit board, buttons, and analog joystick of the foot pedal are modified from a consumer flight joystick. The case of the foot pedal is also made of customized acrylic board. To build the digital eyepiece of Scope+, we removed the posterior part of the Oculus Rift DK2 and fixed it on a metal arm with three joints.

The final product of the foot pedal and the flight joystick we used inside.

Did anything go wrong? A few things. There was no convergence structure in the first version of the microscope module, and we found that the images from the two cameras could not be fused into a stereo image at such a short observing distance.
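A rough way to see why short observing distances are a problem is to compute how far each camera must be turned inward to aim at the same point. The sketch below uses a simplified geometry that is an assumption, not the authors' design: two cameras separated by a baseline, both aiming at a target on the midline, with no mirrors involved. The function name `toe_in_angle_deg` and the numbers in the usage example are hypothetical.

```python
import math


def toe_in_angle_deg(baseline_mm, distance_mm):
    """Inward rotation (degrees) each camera needs to aim at a midline target.

    Simplified model: the target sits on the perpendicular bisector of the
    line joining the two cameras, so each camera sees it offset by half
    the baseline. The total convergence angle is twice this value.
    """
    if baseline_mm <= 0 or distance_mm <= 0:
        raise ValueError("baseline and distance must be positive")
    return math.degrees(math.atan2(baseline_mm / 2.0, distance_mm))


if __name__ == "__main__":
    # Illustrative values only: a 60 mm baseline at two working distances.
    for distance in (1000.0, 100.0):
        angle = toe_in_angle_deg(60.0, distance)
        print(f"distance {distance:6.0f} mm -> toe-in {angle:.1f} degrees per camera")
```

With these illustrative numbers, the required toe-in grows from about 1.7 degrees at 1 m to about 16.7 degrees at 10 cm, which is why parallel cameras that work at room scale can fail to produce a fusable stereo pair at microscope distances.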

Filming in an ophthalmology clinic.

We used a cheap consumer mirror in the first version of the adjustable convergence structure, so the image-feature detection rate was severely limited by the ghost image reflected from its front glass surface.

View of all the components in the eye-surgery model. Most of the components are 3D printed.

We also used the wrong function to read continuous images from the industrial camera in the beginning; the frame rate was about 20 times higher after we fixed this bug.

The final product before the VR Village demonstration at SIGGRAPH 2015.

Finally, we initially chose OpenGL as the graphics API for developing the system. Working directly with OpenGL allowed us to find problems faster and gave us more flexibility; however, the performance was not good enough, and progress was slow because we were doing almost everything ourselves. After two months of struggle and careful evaluation, we decided to rewrite the entire system using Unity and its plugins.

What was the biggest surprise in making this? Because we had no prior experience with motors, the biggest surprise was the difficulty in controlling the stepper motors to rotate smoothly at different speeds and instantly move the microscope module to a different position. It took much longer than expected to solve this problem.
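The article does not describe how the stepper-motor problem was eventually solved. One common technique for smooth speed changes, shown here only as a hedged illustration, is to limit how much the commanded speed may change per control tick so the motor ramps toward its target instead of jumping. The function name `ramp_speed` and all values are hypothetical.

```python
def ramp_speed(current, target, max_accel, dt):
    """Move `current` speed toward `target` without exceeding `max_accel`.

    Speeds are in units per second, `max_accel` in units per second
    squared, and `dt` is the control period in seconds. This is a generic
    acceleration limiter, not the method used in Scope+.
    """
    max_delta = max_accel * dt
    delta = target - current
    if abs(delta) <= max_delta:
        return target                      # close enough to land exactly
    return current + max_delta if delta > 0 else current - max_delta


if __name__ == "__main__":
    speed = 0.0
    while speed != 10.0:
        speed = ramp_speed(speed, target=10.0, max_accel=4.0, dt=0.5)
        print(speed)
```

Calling the limiter once per control tick turns an abrupt speed request into a linear ramp; the same function handles deceleration when the target is below the current speed.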

Was there anything new for you in the making, process, materials, or something else that you can tell us about? Because we adopted the motor system from a Cartesian 3D printer, Scope+ can be easily transformed into an FDM 3D printer by changing the microscope module back to an extruder. Users can have an AR scientific tool and a 3D printer at the same time.

What will you repeat in another project that you did well in this project? The adjustable convergence structure and the video see-through AR techniques will be used and improved in our next AR project.

What is the one thing about making this that you would like to share with other makers? To develop an AR system, you need to cooperate with experts in the fields of computer science, electrical engineering, mechanical engineering, optics, and physiology, which is tough and full of challenges but also fun. Fast prototyping is very important; don't hesitate to change the design or framework if needed after thorough experiments.

Yu-Hsuan Huang, National Taiwan University

Tzu-Chieh Yu, National Taiwan University

Pei-Hsuan Tsai, National Taiwan University

Yu-Xiang Wang, National Taiwan University

Wan-ling Yang, National Taiwan University

Hao-Yu Chang, National Taiwan University

Yu-Kai Chiu, National Taiwan University

Tsai Yu-Ju, National Taiwan University

Ming Ouhyoung, National Taiwan University

Copyright held by authors

The Digital Library is published by the Association for Computing Machinery. Copyright © 2016 ACM, Inc.