Authors:

Mark Baskinger, Mark Gross

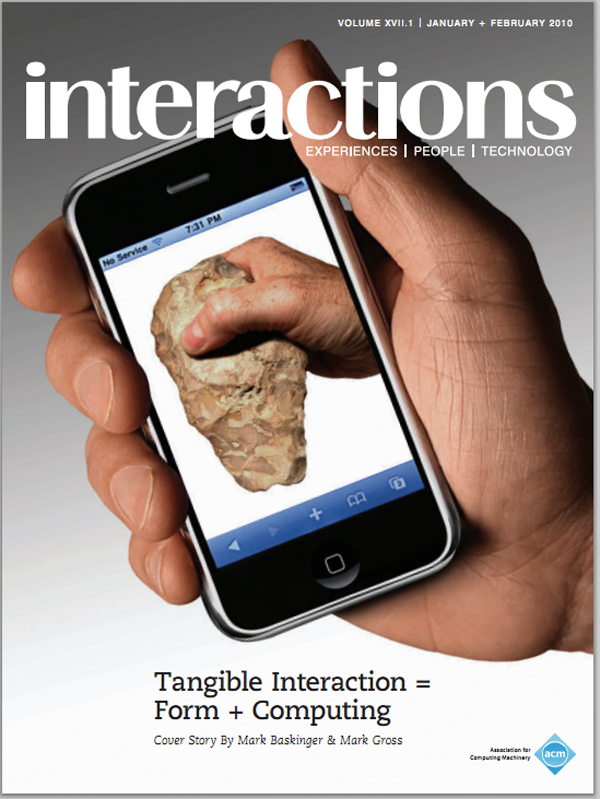

Interaction design melds traditional methods and approaches from other established disciplines. Many immediately think of digital technology or software, but the concepts of "interaction" are deeply rooted in classical industrial design: products are designed to actively engage people and mediate their relationships with systems, activities, information, and with each other. Today interaction design includes services, systems, and strategic planning and reflects core principles of human-system/human-object interaction. Within the diverse landscape of interaction exists a specialized area where physical form and computing combine to yield new paradigms of interaction. This area, "tangible" interaction design, broadens scope, relevance, and application, linking interaction…

